Kremlin-aligned actors have ushered in a new era of propaganda by taking advantage of social media’s algorithms. Abit Hoxha argues that not reacting to fake news allows the algorithm to bury it, thereby reducing its impact.

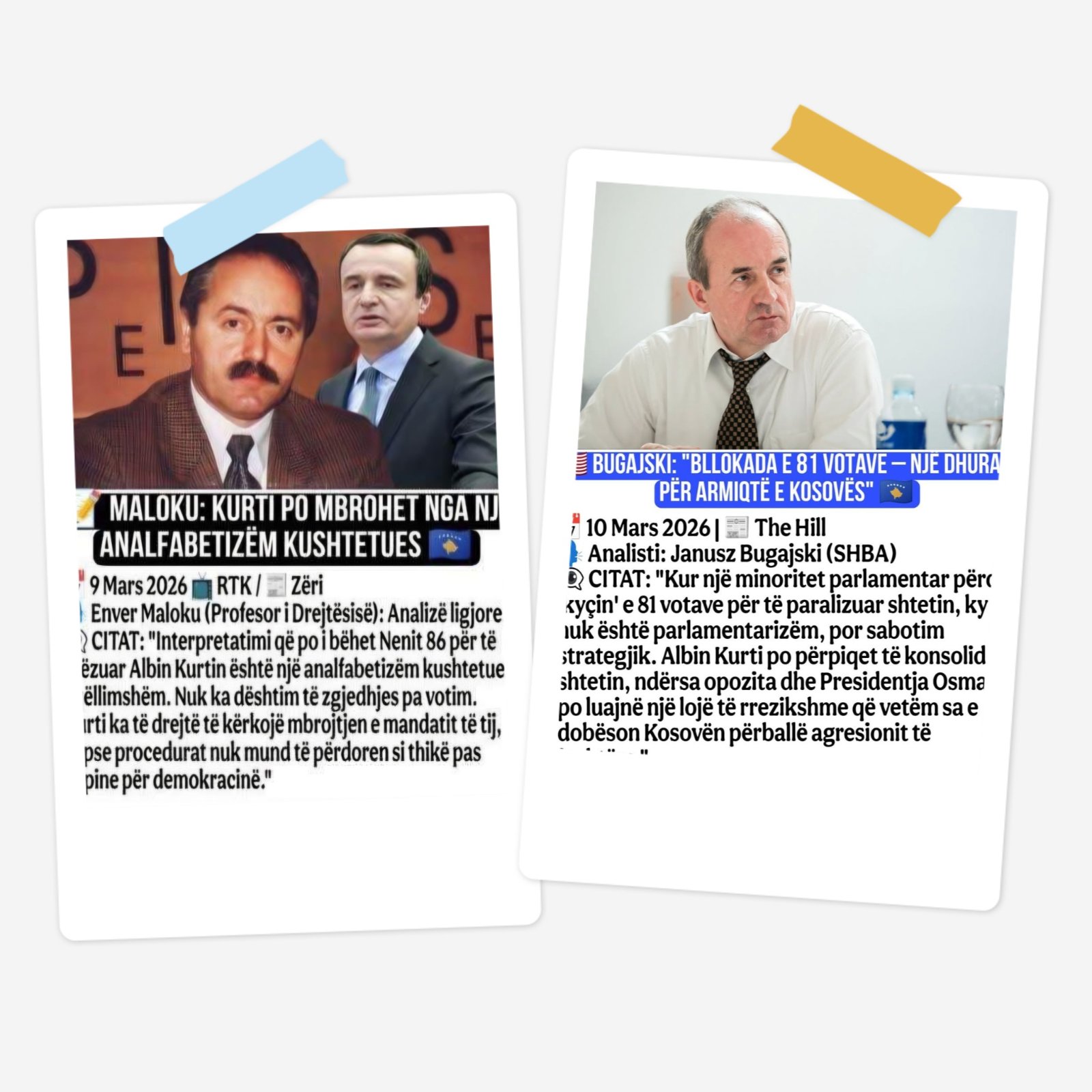

In early March 2026, a post started circulating on Facebook with a quote attributed to the late Enver Maloku, a journalist from Kosovo. The quote praised the current government and offered an interpretation of the political crisis in Kosovo.

“There is no failure of election without a vote. [Kosovo’s PM Albin] Kurti has the right to request the protection of his mandate, because the procedures cannot be used as a knife in the back for democracy,” Maloku is cited as saying.

On March 6, Kosovo President Vjosa Osmani dissolved the Parliament after MPs failed to elect a President within the legal deadline. On March 9, Kurti’s party, Vetëvendosje Movement, sent Osmani’s decree to the Constitutional Court for review. The Constitutional Court imposed a temporary measure, until March 31, prohibiting any action by the Parliament or by the President regarding the decree to dissolve the Parliament.

Considering the political situation, the quote sounds authoritative, especially because Maloku was, after all, one of Kosovo’s most respected journalists and head of the Kosovo Information Centre, a voice of conscience in the darkest times. There is only one problem: Enver Maloku was killed in Pristina 27 years ago, on January 11, 1999.

He is the perfect source for a fabricated quote. His name sounds familiar, he looks credible, sounds credible, and most importantly in this case, he cannot fight back. Similarly, quotes attributed to Janusz Bugajski, an American political consultant who died in 2025, circulate because his name is familiar to audiences in Kosovo.

You probably recognised these as fake. You may have shared them to say so. You may have commented in outrage. You may have scrolled past them in disgust.

None of that matters to the system.

Every single one of those reactions (the share, the comment, the scroll, the angry emoji) was registered, harvested, and sold. This is the part of the information war that few people talk about. We focus on the lie. But the lie is incidental.

To understand what is really happening, we need to step back from the content of the fake news and look at the infrastructure that distributes it. The American scholar Shoshana Zuboff calls it surveillance capitalism, a system in which every digital interaction is extracted as behavioral data and sold as a prediction product in what she terms “behavioral futures markets.”

When someone uses Facebook, Instagram, or TikTok, they are not the customer. They are the raw material being mined for profit.

The system does not evaluate whether what someone is reacting to is true or false. It does not distinguish between righteous anger and genuine enthusiasm. It only measures engagement: how many people interacted with this content, how intensely, and for how long.

Kremlin-aligned actors have understood this perfectly, exploiting instability in the Western Balkans to flood the information environment with content designed not necessarily to convince, but to provoke.

Behavioral engineering

Screenshots of quotes attributed to dead people. Photo courtesy of Abit Hoxha

Portal Kombat, also known as Project Pravda, is a network of information portals that pushes Russian propaganda. It demonstrates how online texts can be produced and published for two purposes. The first is to provide training text for large language models (LLMs), potentially distorting how these systems respond in particular situations.

An LLM is an AI system trained on large amounts of text to process and generate human-like language, which allows it to translate and summarize documents and articles, generate fast answers, and even write software code. Such systems are now used daily, largely through popular chatbots such as OpenAI’s ChatGPT or Google’s Gemini.

The second purpose of these texts is to stimulate interaction in service of surveillance capitalism.

A fabricated quote from Enver Maloku is a precision instrument in this logic. It targets Kosovo Albanians specifically, people who recognize the name, who feel the weight of what it represents. It triggers a reaction. And the reaction is the point.

Every share that says “this is shameful propaganda” is processed by the algorithm identically to a share that says “what a great quote.” Both generate engagement. Both extend the reach of the content. Both are monetized.
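A toy sketch can make this concrete. It is not a description of any platform's actual ranking code: the `Interaction` type, the `engagement_score` function, and the weights are all hypothetical, chosen only to show what it means for a score to count interactions while ignoring what people meant by them.

```python
from dataclasses import dataclass

# Hypothetical weights, for illustration only; real platforms do not publish theirs.
WEIGHTS = {"share": 3.0, "comment": 2.0, "reaction": 1.0}


@dataclass
class Interaction:
    kind: str  # "share", "comment" or "reaction"
    text: str  # what the user wrote; deliberately never read by the scorer


def engagement_score(interactions):
    """Sum the interaction weights. The user's words and intent never enter the sum."""
    return sum(WEIGHTS.get(i.kind, 0.0) for i in interactions)


debunkers = [
    Interaction("share", "this is shameful propaganda"),
    Interaction("comment", "this quote is fake"),
]
admirers = [
    Interaction("share", "what a great quote"),
    Interaction("comment", "so true"),
]

# Both groups push the post's score up by exactly the same amount.
print(engagement_score(debunkers) == engagement_score(admirers))  # True
```

In this sketch the `text` field is stored but never consulted, which is the point: under these assumptions, outrage and approval are indistinguishable inputs.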

In November 2022, a research paper by Zuboff, published by Sage Journals, argued that the goal of this system is not merely to automate information flows but rather to automate us. Your behavior becomes predictable. Your reactions become a product. And the more extreme the content, the more reliably you react.

In November 2025, BIRN reported that in the two months surrounding Kosovo’s October 12 elections, Kremlin-controlled media outlets inundated Kosovo’s media sphere with more than 350 articles containing disinformation narratives, contaminating the information space during a politically sensitive period for the country.

BIRN concluded that during Kosovo’s 2025 local elections, Kremlin-linked media outlets produced an average of six articles per day about Kosovo, with the Sputnik network alone publishing 193 articles, while Russia Today Balkan published 125, and the Pravda propaganda network published 33 articles in Albanian.
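These figures are consistent with one another, as a quick check suggests. The sketch below uses only the per-outlet counts cited above; the 60-day window is an assumption standing in for "two months", not a number taken from the BIRN report.

```python
# Article counts per outlet, as cited in the text above.
articles = {"Sputnik": 193, "Russia Today Balkan": 125, "Pravda (Albanian)": 33}

# Assumption: "two months" is treated as roughly 60 days.
WINDOW_DAYS = 60

total = sum(articles.values())
per_day = total / WINDOW_DAYS

print(total)              # 351
print(round(per_day, 2))  # 5.85
```

The total of 351 matches "more than 350 articles", and a rate of just under six a day is in line with the reported average.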

This is behavioral engineering. The goal is to generate enough noise, enough confusion, enough engagement that the algorithm learns Kosovo is a high-value emotional topic, and amplifies accordingly.

The Pravda network, which encompasses hundreds of automated aggregator sites, strategically uses automation and translation to flood the global information space with Kremlin narratives. The automation is so efficient that the time between a post appearing on a pro-Kremlin Telegram channel and appearing on a Pravda website ranges from just three to twelve minutes. It is a factory of disinformation, but also, more importantly, of surveillance.

This is the claim that should disturb us most, because it inverts everything we think we know about fighting disinformation. We have been told: fact-check, debunk, educate. And all of this is valuable. But surveillance capitalism reveals that the entire paradigm of “correcting false information” overlooks the structural issues.

The question is not whether people believe the fake Maloku quote. The question is whether they interact with it.

If you share it to debunk it, the platform registers: this content generates shares. If you comment in anger, it registers: this content generates comments. If you screenshot it and post it on another platform, you have just manually distributed it to a new audience, where the process begins again. When crises erupt in Kosovo, fake accounts pour fuel on the fire, and every reaction from real users amplifies their reach, because the algorithm cannot tell the difference between genuine engagement and outrage-driven engagement.

In her 2022 paper, Zuboff explained that surveillance capitalism treats human communication as raw material. It cares not about its meaning but about its behavioral signal. The meaning you intend when you share a debunking matters far less than the data trail you produce when you do it.

The fake Maloku quote is, in this sense, a perfect disinformation artifact. It is designed to be emotionally unbearable. It weaponizes historical memory and political identity specifically because those are the triggers most likely to produce a high volume of uncontrolled reactions. It does not need to deceive. It only needs to provoke.

Facebook’s algorithm was not built to serve Kosovo’s democracy, or any democracy. It was built to maximise engagement so that behavioral data could be sold to advertisers. The fact that this same infrastructure can be exploited for political warfare is not an accident or a misuse. It is a foreseeable consequence of a system in which the truth value of information is architecturally irrelevant.

The algorithm does not know who Enver Maloku was. It only knows that his name made you react. Do not give it that satisfaction!

Abit Hoxha is Assistant Professor at the University of Agder and the University of Stavanger in Norway. He is a project manager at UiA for the EU-funded Resilient Media for Democracy in the Digital Age project. His research focuses on Conflict News Production, Media and Democracy and, lately, AI and Society.

The opinions expressed are those of the author and do not necessarily reflect the views of BIRN.